Hello! I'm Kaijing Ma

About Me

I recently graduated from Tongji University with a Bachelor's degree in Computer Science and Technology. My name carries the meaning of lush growth and thriving energy, and I am naturally cheerful, optimistic, and curious. I love exploring diverse facets of life — from arts and literature to hands-on experiments, tinkering with robots, and building quirky projects for fun. I am always eager to embrace new challenges and learn through both collaborative teamwork and self-driven exploration.

Research Interests

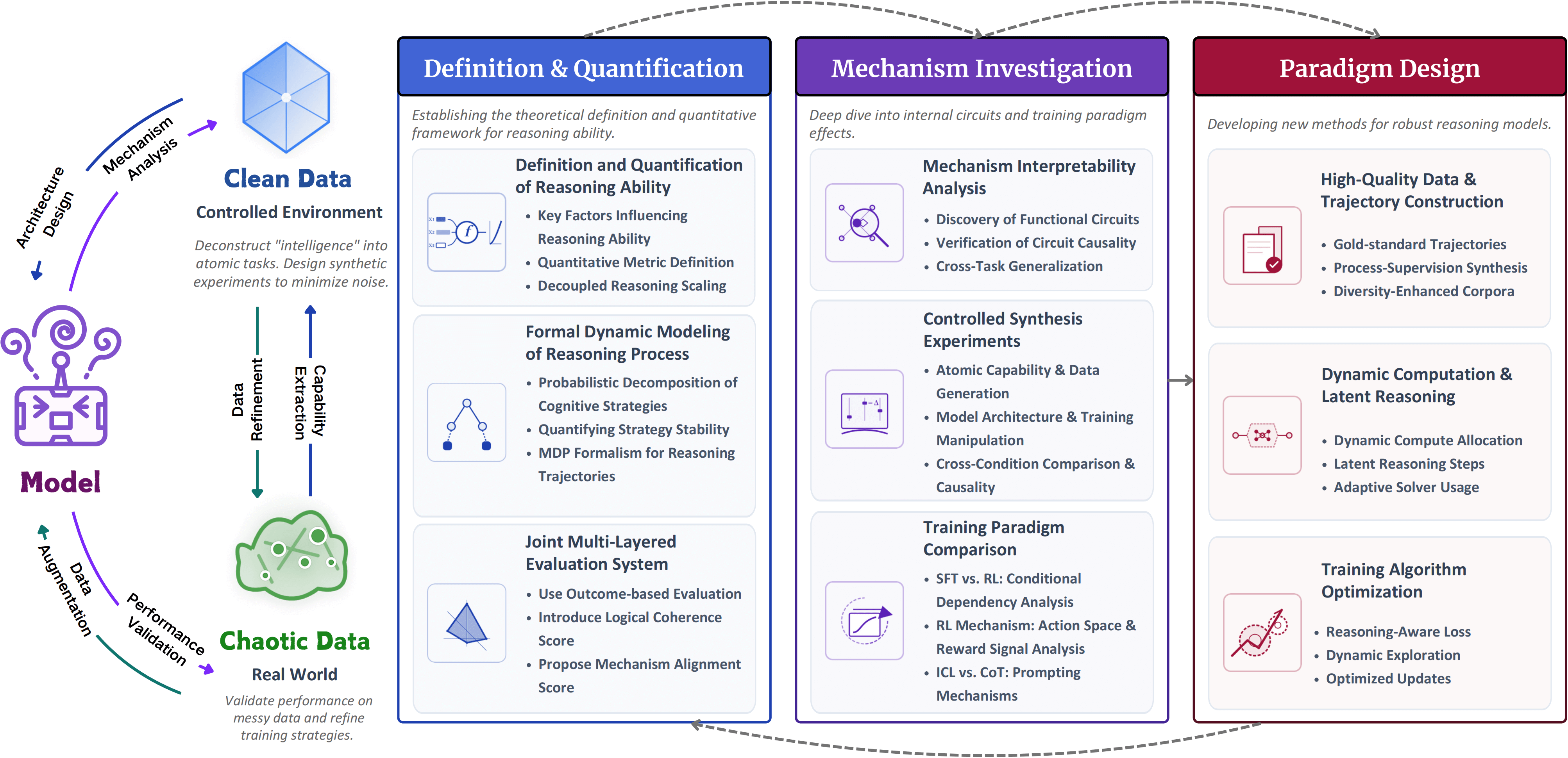

My research vision focuses on transforming the abstract concept of "intelligence" into engineering systems that are constructible, measurable, and explainable. Specifically, my research interests include:

- Natural Language Processing (NLP) — studying language understanding and generation with large-scale models.

- Reasoning Capabilities of LLMs — evaluating and systematically improving how LLMs perform logical, multi-step reasoning.

- Mechanistic Interpretability — uncovering the underlying mechanisms behind LLM reasoning processes.

- AI Safety — designing methods to ensure robust, safe, and aligned AI behavior.

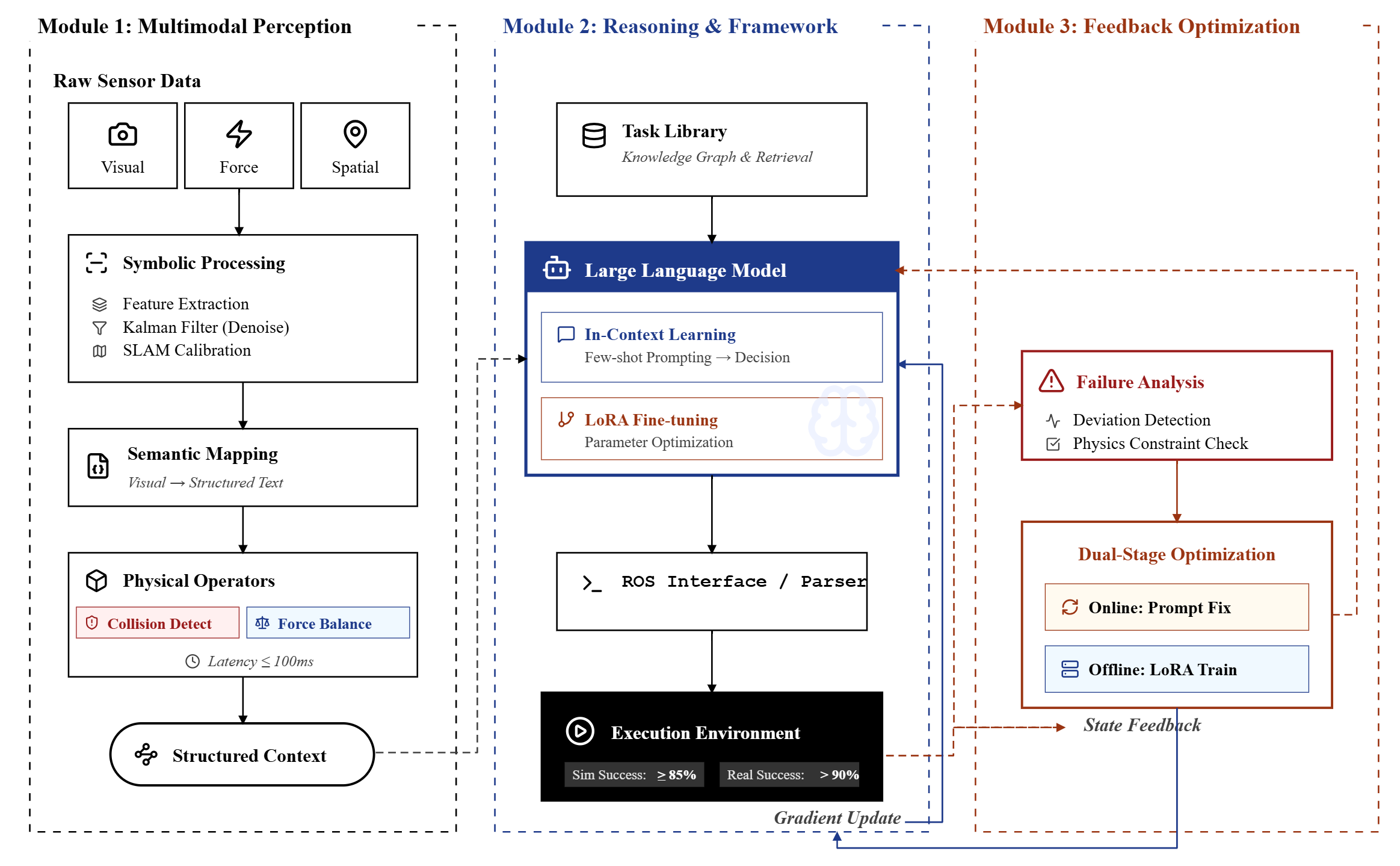

- Embodied Intelligence — exploring how AI can interact with and learn from physical environments and robotic systems.

Education

Personal Development Timeline


Research Experience

Working on pretraining algorithms for large-scale models, focusing on optimization, data-pipeline design, and scaling up model training to improve performance and efficiency.

Worked on formal reasoning and automated theorem proving with large language models (LLMs), constructing large datasets for reasoning experiments and evaluation.

Developed MusicDSL, a domain-specific language for musical structure, and built middleware connecting digital audio workstations (DAWs) with AI models.

Designed benchmarks and evaluation tools to systematically assess models' reasoning capabilities, and authored detailed research reports supporting team projects and analyses.

Developed cloud–edge pedestrian re-identification algorithms, implemented multi-level clustering methods, and authored patents.

Implemented a secure steganography system integrating iMEC (iterative minimum-entropy coupling) with GPT-2.

Studied AI alignment and safety through technical readings and group discussions.

Other Experience

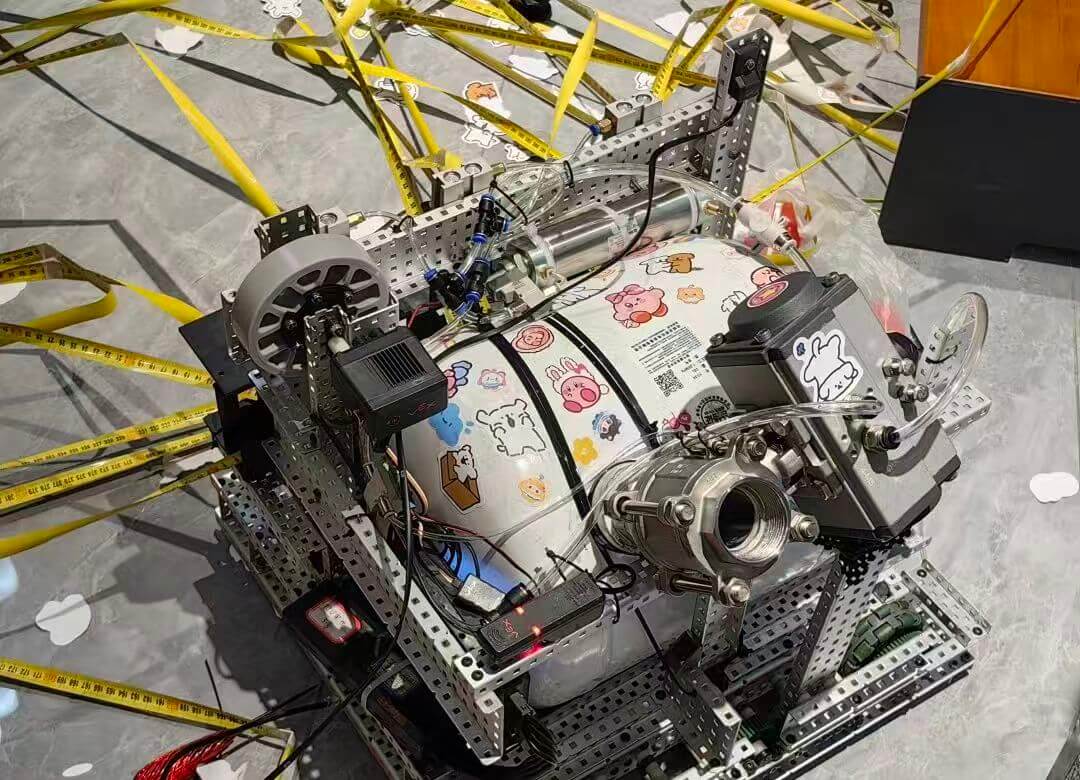

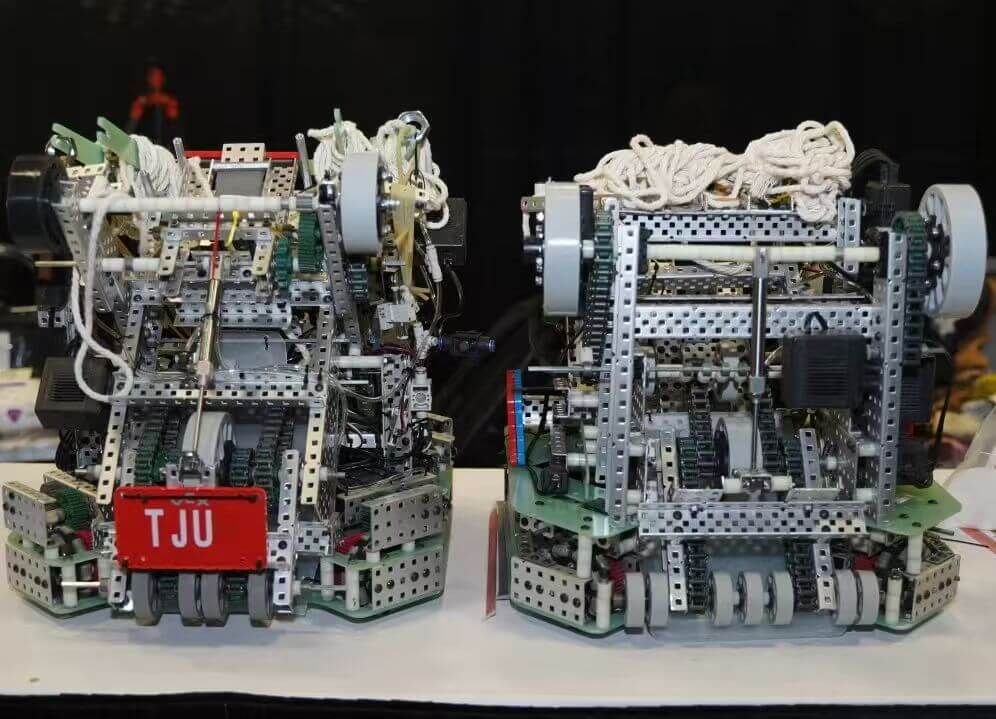

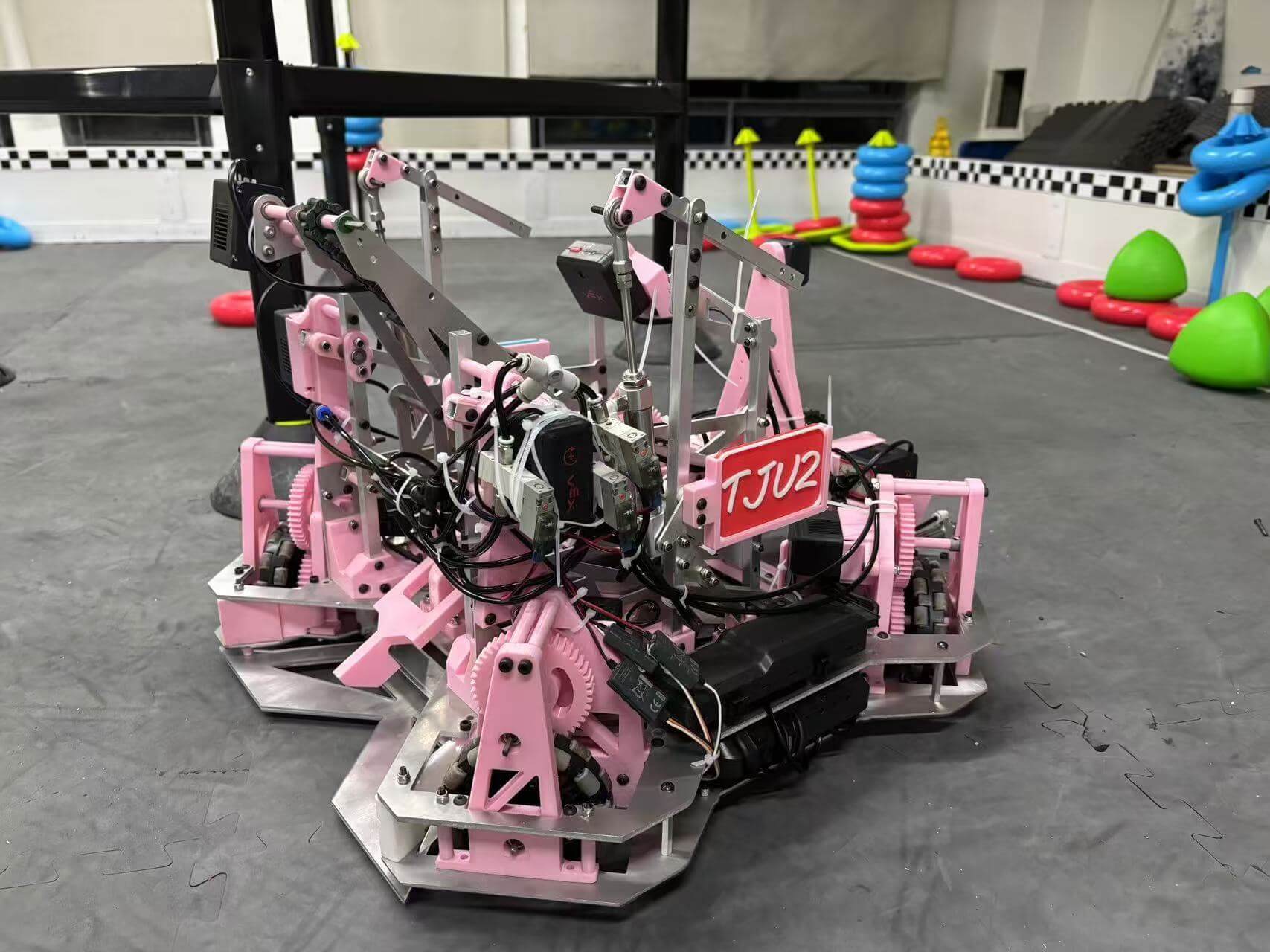

- Led the development of the tjulib library, overseeing its design and implementation; designed an omnidirectional octagonal chassis and implemented an odometry algorithm for full-field positioning.

Publications

Listed in reverse chronological order (most recent first)

Ongoing Long-term Project

OpSynth-MI is a long-term research agenda that I lead, aimed at developing a systematic, operator-based framework for mechanistic interpretability. Using newly defined operators, the project builds a controllable reasoning sandbox that simulates declarative and procedural knowledge (two knowledge types drawn from cognitive psychology), explicitly models the dependencies between them, and explores how they interact compositionally. This series of works seeks to progressively uncover and formalize the internal mechanisms of large language models, making them more transparent and deepening our understanding of how they reason.

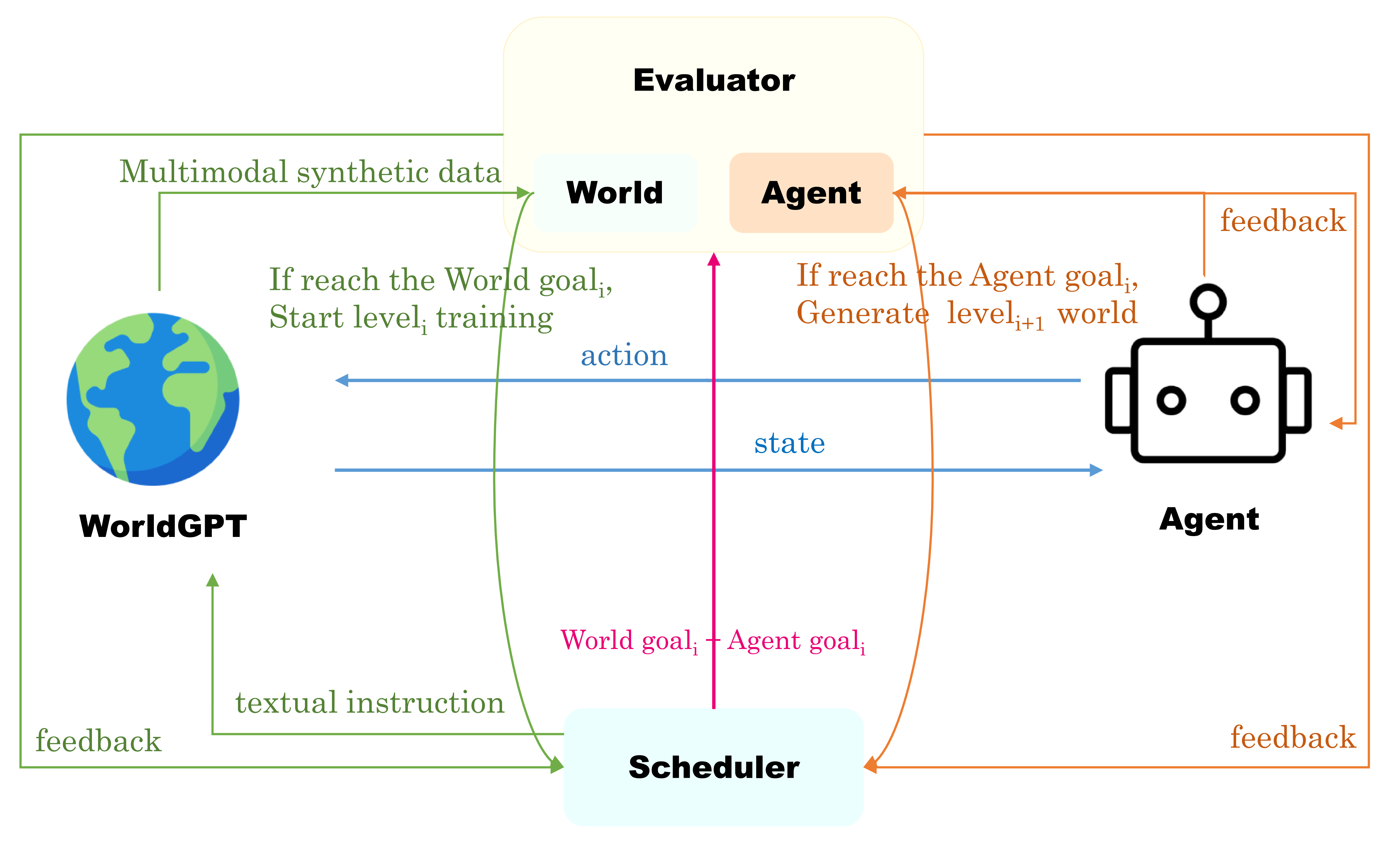

Research Vision & Concept Maps

A selection of visual summaries of my research ideas, conceptual frameworks, and long-term directions.

Competition Awards

Excellence Award — 2023 CCF Software Conference Robotics Large Model and Embodied Intelligence Competition

First Prize — Professional Track 1, 2023 AI for Brain Science Collegiate Challenge

First Prize — Creative Group, 2023 Shanghai Female Student Innovation and Entrepreneurship Competition

4th Place — 2023 VEX Robotics World Championship, VEX U Design Division

Design Award — 2023 China University Students Intelligent Robot Creativity Competition

Languages & Skills

Languages: Chinese (Native) | English (Fluent)

Programming: Python, C/C++, Verilog, JavaScript, HTML/CSS, Assembly

Machine Learning & Deep Learning: PyTorch, TensorFlow, HuggingFace Transformers

Large Language Models: Megatron-LM, vLLM, LLaMA-Factory (SFT), VERL (RL), NNSight (Interpretability), Ray (Distributed)

Robotics & Simulation: Mechanical Assembly, SolidWorks, 3D Printing, ROS, Sensors, Basic Control